“Generative adversarial network” and “deep convolutional neural network”: the terms sound daunting, but behind them lies potential for AI to positively impact human lives.

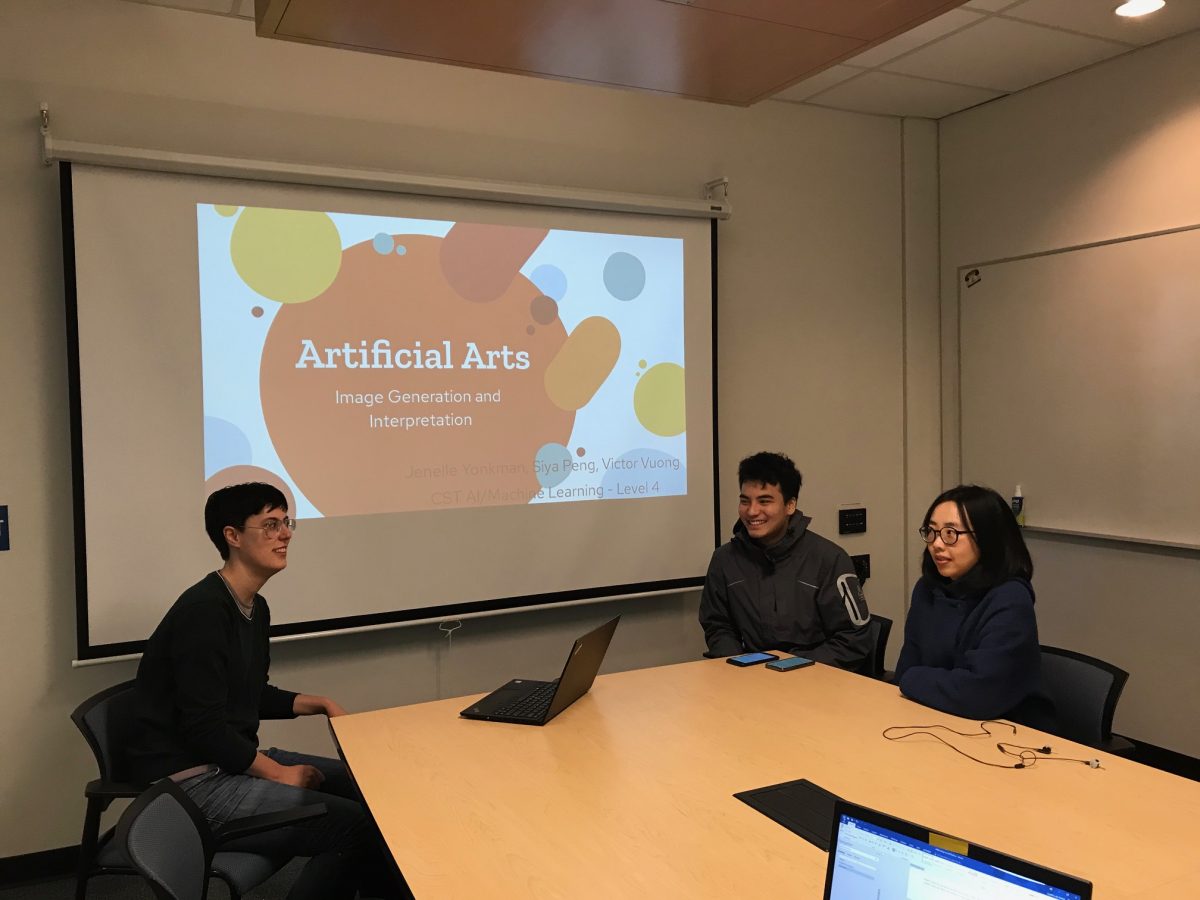

Students in the class of 2019 Artificial Intelligence and Machine Learning (AIML) Option of BCIT's Computer Systems Technology Diploma program recently wrapped up a year of specialty study with their project demos.

Combining datasets for insight

Led by Option Head Dr. Chi En Huang, students took on public datasets from the City of Vancouver Open Data Portal and chose an angle to tackle with AIML. They were tasked with developing a machine learning solution that could use the various datasets to answer questions, create meaning, form insights, and inspire action.

“Defining a use case is the first step in a project like this, maybe also the hardest. That is, what can we try to gain from this data?” explains Chi En. “Then the next issue is that real-world data is often not very clean, providing additional challenges. But these struggles, and the overall process, is critical to learning, even if results don’t materialize.”

From aggregating multiple datasets for a land value predictor, to parking infraction insights, students delved into their data over the three-week project.

BCIT now offers Topics in Computer Programming – Artificial Intelligence, a Part-time Studies course that provides an introduction to the various topics in AI. Scholarships for women interested in AI may be available through AthenaPathways.org.

AI-generated art: Inexpensive but still appealing?

For one team, inspiration came from the City’s database of public art.

Siya Peng, Victor Vuong, and Jenelle Yonkman set out to see what AIML could learn from identifying and reproducing art. They aimed to make art more accessible by generating new art at virtually no cost, and thus enrich city life.

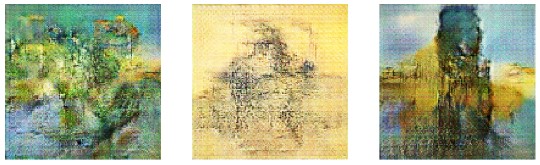

Their model was a “Generative Adversarial Network” (GAN), in which an AI “generator” creates art and an AI “discriminator” classifies each piece it sees as either real or AI-generated. The two are trained against each other, and art results when the generator creates something that fools the discriminator into labelling it as real art.
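For readers curious about what that back-and-forth looks like in code, here is a minimal sketch. It is not the team's model: single numbers stand in for images, the generator and discriminator each have only two parameters, and every name in it (`Generator`, `Discriminator`, `train_step`, the learning rate) is a hypothetical choice made for illustration.

```python
import math
import random

EPS = 1e-12  # guards against log(0)


def sigmoid(t: float) -> float:
    t = max(-60.0, min(60.0, t))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-t))


def safe_log(p: float) -> float:
    return math.log(max(p, EPS))


class Discriminator:
    """Scores a sample: near 1 means "looks real", near 0 means "looks generated"."""

    def __init__(self) -> None:
        self.w, self.c = 0.1, 0.0

    def score(self, x: float) -> float:
        return sigmoid(self.w * x + self.c)


class Generator:
    """Turns random noise z into a sample (a number standing in for an image)."""

    def __init__(self) -> None:
        self.a, self.b = 1.0, 0.0

    def sample(self, z: float) -> float:
        return self.a * z + self.b


def discriminator_loss(d: Discriminator, real: float, fake: float) -> float:
    # Low when real samples score high and generated samples score low.
    return -safe_log(d.score(real)) - safe_log(1.0 - d.score(fake))


def generator_loss(d: Discriminator, fake: float) -> float:
    # Low when the discriminator is fooled into scoring the fake as real.
    return -safe_log(d.score(fake))


def train_step(g: Generator, d: Discriminator, real: float, z: float, lr: float = 0.05) -> None:
    fake = g.sample(z)

    # 1) Discriminator step: gradient descent on discriminator_loss.
    p_real, p_fake = d.score(real), d.score(fake)
    d.w -= lr * (-(1.0 - p_real) * real + p_fake * fake)
    d.c -= lr * (-(1.0 - p_real) + p_fake)

    # 2) Generator step: gradient descent on generator_loss,
    #    pushed back through the updated discriminator.
    grad_fake = -(1.0 - d.score(fake)) * d.w
    g.a -= lr * grad_fake * z
    g.b -= lr * grad_fake


if __name__ == "__main__":
    random.seed(0)
    g, d = Generator(), Discriminator()
    for _ in range(500):
        # "Real" data here is just numbers drawn around 4.0 (an assumption).
        train_step(g, d, real=random.gauss(4.0, 0.5), z=random.gauss(0.0, 1.0))
    print("generator offset b:", round(g.b, 2))
```

Each call to `train_step` first nudges the discriminator to tell real from generated more reliably, then nudges the generator toward whatever the discriminator currently scores as real. A real art-generating GAN follows the same loop, with deep neural networks in place of these two-parameter models.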

The team trained their AI using Vancouver’s database of public art, as well as art by celebrated artists like Van Gogh, Picasso, and Da Vinci. The AI examines the real art, pixel by pixel, to “learn” from it.

They also had the AI create a description of its resulting art. Some of the descriptions struck the team as quite funny, often repeating words to an extreme and sometimes producing more convoluted gaffes.

“We frequently use Machine Learning for practical applications like screening resumes. But it often amplifies biases we already have,” says Jenelle Yonkman, while discussing some of the broader insights of this part of the project.

“We can see this in our project: the AI repeats the same words. This is a more explicit way of showing how AI can strengthen things we’ve already done. In resume-screening, if you phrase things differently, you might never even be considered for the job.”

Aircraft maintenance: Can AI reduce time needed to detect defects?

Another team, comprising Steve Cho, Andy Tang, and Jeffery Wasty, investigated the feasibility of an image-based aircraft defect detection program.

They were interested in reducing aircraft examination times, and had special insight because Andy had experience in aircraft maintenance. This kind of blended expertise is often what leads to insights into how computing, and AI specifically, might be applied to help society.

“I spent a lot of time looking for aircraft defects, and thought it would be really good if computing could help,” explains Andy.

Interdisciplinary opportunity

Through cross-discipline connections with Geomatics faculty member and drone expert Dr. Eric Saczuk, and with data from Spexi Geospatial, the team gained access to a dataset of aircraft images taken by drones. Since a drone can take images of all aircraft surfaces, the collaborators hoped it could help identify problems faster than a human Aircraft Maintenance Engineer (AME) could.

They sought to use AI to classify the images as defect or non-defect.

While the team made progress, the AI struggled to tell cracks (defect) from normal airplane joints or screws (non-defect) when trained with only a limited amount of image data. They had to use 90% of the available data to train the system to classify possible defects correctly.
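As a rough illustration of what a 90% training share looks like in practice, here is a minimal sketch of a shuffled hold-out split. The article does not say how the team used the remaining 10%; holding it back for evaluation is an assumption, and the function name and file names are hypothetical.

```python
import random


def split_dataset(items, train_fraction=0.9, seed=0):
    """Shuffle the items, then cut them into a training set and a held-out set."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


if __name__ == "__main__":
    # Hypothetical file names standing in for labelled drone images.
    images = [f"image_{i:03d}.png" for i in range(100)]
    train, held_out = split_dataset(images)
    print(len(train), "training images,", len(held_out), "held out")
```

With so little data left over for checking the model, a few unusual images can swing the measured accuracy, which is one reason a larger dataset helps.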

“You are what you eat – it’s the same with machine learning,” explains the team. “You really need an ample amount of data, different types, correctly labelled, of similar complexity.” The machine learning model can be improved with new inputs and a larger training sample size.

“We’re definitely not replacing the human with this model yet, but you can see the potential,” says Andy.

“This is a huge step forward in interdisciplinary collaborative work,” adds Eric.
